
The projection function takes the theta and phi values and returns coordinates on a cube from -1 to 1 in each direction. The cubeToImg function then takes those (x,y,z) coordinates and translates them to output image coordinates. Its `coords` argument is a tuple with the side and the x, y, z coordinates, and `edge = inSize[0]/4` is the length of an edge of the cube in pixels. One face, for example, is placed with `(x,y) = (int(edge*(3-coords[1])/2), int(edge*(5-coords[2])/2))`. Tested on an image of Buckingham Palace, the algorithm seems to get the geometry right, including most of the lines in the paving. The remaining errors are due to not having a one-to-one map of pixels, so what we need to do is use an inverse transformation.
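A minimal sketch of that inverse transformation follows. It assumes a z-up sphere whose points are (sin θ cos ø, sin θ sin ø, cos θ), and a 2:1 input panorama whose width spans ø from 0 to 2π and whose height spans θ from 0 to π. Rather than pushing each input pixel onto the cube, it walks over every output pixel of a face, turns that pixel into a direction, and looks up the nearest input pixel, so every output pixel is filled exactly once. The face names and orientations in `FACES`, and the helpers `source_pixel` and `render_face`, are hypothetical and are not taken from the original program.

```python
import math

# Hypothetical face table: maps (a, b) in [-1, 1]^2 (a to the right, b
# downward in the face image) to a direction (x, y, z) with z up. Every face
# uses right = forward x up, so no face comes out mirrored. Names and
# orientations are assumptions; adapt them to your viewer.
FACES = {
    "+x": lambda a, b: (1.0, -a, -b),
    "-x": lambda a, b: (-1.0, a, -b),
    "+y": lambda a, b: (a, 1.0, -b),
    "-y": lambda a, b: (-a, -1.0, -b),
    "top": lambda a, b: (a, b, 1.0),
    "bottom": lambda a, b: (a, -b, -1.0),
}


def direction_to_polar(x, y, z):
    """Return (theta, phi): theta in [0, pi] from +z, phi in [0, 2*pi)."""
    r = math.sqrt(x * x + y * y + z * z)
    theta = math.acos(max(-1.0, min(1.0, z / r)))
    phi = math.atan2(y, x) % (2 * math.pi)
    return theta, phi


def source_pixel(face, i, j, edge, in_w, in_h):
    """Inverse mapping: for output pixel (i, j) on `face`, return the
    equirectangular input pixel (u, v) to sample (nearest neighbour)."""
    # Centre of the output pixel in [-1, 1] face coordinates.
    a = 2.0 * (i + 0.5) / edge - 1.0
    b = 2.0 * (j + 0.5) / edge - 1.0
    theta, phi = direction_to_polar(*FACES[face](a, b))
    # Assumed input layout: phi spans the width, theta spans the height.
    u = min(int(phi / (2 * math.pi) * in_w), in_w - 1)
    v = min(int(theta / math.pi * in_h), in_h - 1)
    return u, v


def render_face(face, edge, get_pixel, in_w, in_h):
    """Build one edge x edge face as a list of rows. Each output pixel gets
    exactly one sampled value, so the result has no holes."""
    return [
        [get_pixel(*source_pixel(face, i, j, edge, in_w, in_h)) for i in range(edge)]
        for j in range(edge)
    ]
```

With Pillow, `get_pixel` could wrap the pixel-access object returned by `Image.load()`, e.g. `lambda u, v: pix[u, v]`. Replacing the nearest-neighbour lookup with bilinear interpolation would give smoother faces at the cost of a little more arithmetic.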

If you do the slicing with ImageMagick, you could put the command into a script and just run it each time you have a new image.

It's hard to tell exactly what algorithm the program uses, but we can try to reverse engineer what is happening by feeding a square grid into it, which gives us a clue as to how the box is constructed. Imagine a sphere with lines of latitude and longitude on it, and a cube surrounding it. Projecting from the point at the center of the sphere then produces a distorted grid on the cube.

Mathematically, take polar coordinates r, θ, ø for the sphere r = 1, with θ measured from the z axis. For the top face, the projected point must satisfy (a sin θ cos ø, a sin θ sin ø, a cos θ) = (x, y, 1), so a = 1/cos θ, x = tan θ cos ø and y = tan θ sin ø. The code then chooses the face by comparing θ against thresholds such as 2.527 and ø against π/4, 3π/4 and 5π/4.
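The central projection described above can be sketched in a few lines. This is an assumed variant, not the program's own case analysis: instead of testing θ and ø against fixed thresholds, it scales the unit-sphere point by a = 1 / max(|x|, |y|, |z|). That lands the point on whichever face the ray from the center exits through, and on the top face it reduces to the a = 1/cos θ above. The function name `project_to_cube` and the `+x`/`-z` style face labels are hypothetical.

```python
import math


def project_to_cube(theta, phi):
    """Project the unit-sphere point at polar angles (theta, phi) from the
    centre onto the cube [-1, 1]^3.

    Returns (face, x, y, z), where face is "+x", "-x", "+y", "-y",
    "+z" (top) or "-z" (bottom).
    """
    # Point on the unit sphere with z up: (sin θ cos ø, sin θ sin ø, cos θ).
    d = (
        math.sin(theta) * math.cos(phi),
        math.sin(theta) * math.sin(phi),
        math.cos(theta),
    )
    # The ray from the centre leaves the cube through the face of the
    # largest |component|; scaling by a = 1 / that magnitude puts the point
    # on that face. For the top face this is a = 1 / cos θ.
    axis = max(range(3), key=lambda k: abs(d[k]))
    a = 1.0 / abs(d[axis])
    x, y, z = (a * c for c in d)
    face = ("+" if d[axis] > 0 else "-") + "xyz"[axis]
    return face, x, y, z
```

For a small θ such as `project_to_cube(0.3, 1.0)`, the point lands on the top face with z equal to 1 (up to rounding) and x, y matching tan θ cos ø and tan θ sin ø.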

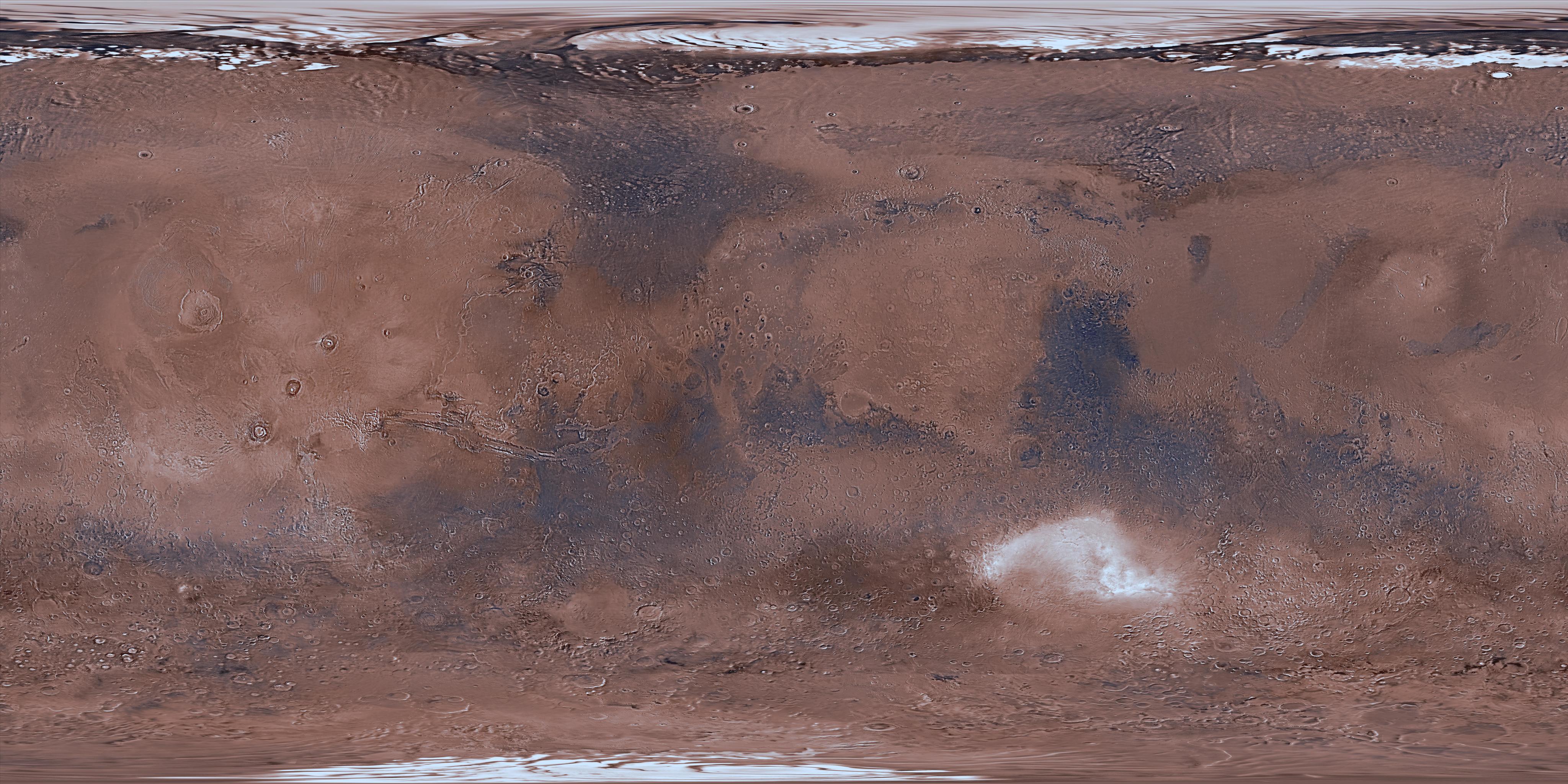

Hi all, please excuse my Noobiness, but what is up with Unity's spherical mapping method?

I'm trying to wrap an image of the Earth on a Unity sphere. I create a new material, select Standard, and attach the NASA Earth map you can find everywhere to the albedo texture map, and below is the result. Note how distorted North America and Antarctica look: there is definite pinching at the poles and a seam where there should be none. It seems the UV coordinates attached to Unity's sphere object must be all wrong, or something, and I can find no documentation or online help that addresses the issue.

So I dig and dig and dig and dig, for two days now, and the only thing I can find is a resource with shader code that implies it will map correctly to a sphere. I copy the code to create a custom shader, but it does exactly the same thing (probably no wonder: the code looks suspiciously like Unity's shader template code), which leads me to believe there is something wrong with the object itself.

I'm currently working on a simple 3D panorama viewer for a website. For mobile performance reasons I'm using the Three.js CSS3D renderer, which requires a cube map split up into six single images. I'm recording the images on the iPhone with Google Photo Sphere, or similar apps that create 2:1 equirectangular panoramas, and then resizing and converting these to a cube map with a (Flash) website.

Preferably, I'd like to do the conversion myself, either on the fly in Three.js, if that's possible, or in Photoshop. I found Andrew Hazelden's Photoshop actions, and they seem kind of close, but no direct conversion is available. I'd like to avoid going through a 3D application like Blender, if possible. Is there a mathematical way to convert these, or some sort of script that does it? Maybe this is a long shot, but I thought I'd ask. I have okay experience with JavaScript, but I'm pretty new to Three.js. I'm also hesitant to rely on the WebGL functionality, since it seems either slow or buggy on mobile devices.

If you want to do it server side, there are many options. ImageMagick has a bunch of command-line tools which could slice your image into pieces.
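Slicing a finished cube map into the six single images the CSS3D renderer needs comes down to cropping square cells. The sketch below assumes a horizontal-cross layout on a 4 × 3 grid: left, front, right and back across the middle row, with top and bottom above and below the front face. That layout, the face names, and the helper `cross_face_boxes` are assumptions, so adjust the `cells` table to whatever your converter produces. The helper only computes crop boxes and has no dependencies.

```python
def cross_face_boxes(width, height):
    """Return crop boxes (left, upper, right, lower) for the six faces of a
    horizontal-cross cube map laid out on a 4 x 3 grid of square cells."""
    if width * 3 != height * 4:
        raise ValueError("expected a 4:3 horizontal cross")
    edge = width // 4  # side length of one face in pixels
    # Hypothetical layout: (column, row) of each face in the 4 x 3 grid.
    cells = {
        "left": (0, 1),
        "front": (1, 1),
        "right": (2, 1),
        "back": (3, 1),
        "top": (1, 0),
        "bottom": (1, 2),
    }
    return {
        name: (c * edge, r * edge, (c + 1) * edge, (r + 1) * edge)
        for name, (c, r) in cells.items()
    }
```

Each box uses the `(left, upper, right, lower)` order that Pillow's `Image.crop` accepts, so `img.crop(box).save(name + ".png")` writes one face per file. The same numbers translate directly to an ImageMagick `-crop WxH+X+Y` geometry if you would rather script the command line.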
